Or how a single word can have a trunkful of meanings.

“Liked your blog post. It was so random.” That, believe it or not, is one of the nicest things anyone has ever said to me. You may think it funny that I see this as a compliment. But truth be told, randomness is part of my mental DNA, as anyone who has attempted to hold a conversation with me can attest. Even Google seems to agree. A few years ago, they temporarily closed my Blogger account because, according to their algorithms, my posts consisted of random, machine-generated words. I kid you not.

So why am I going on about this? Well, someone asked me about QNX Software Systems’ experience in the automotive market and, sure enough, my mind went off in several directions all at once. Not that that’s unusual. In this case, however, there was justification for my response. Because when it comes to cars and QNX, experience has a rich array of meanings.

First, there is the deep experience that QNX has amassed in the automotive industry. We’ve been at it for 15 years, working hand-in-hand with car makers and tier one suppliers to create infotainment systems, digital instrument clusters, connectivity modules, and handsfree units for tens of millions of vehicles.

Next, there’s the experience of working with QNX the company. In the auto industry, almost every automaker and tier one supplier has unique demands — not to mention immovable deadlines. As a result, they need a supplier, like QNX, that’s deeply committed to the success of their projects, and that can provide the expert engineering services they need to meet start-of-production commitments. No shrink-wrapped solutions for this crowd.

Then, there’s the experience of using QNX technology to build automotive systems — or any type of system, for that matter. Take the QNX OS, for example. Its microkernel architecture makes it easier to isolate and repair bugs, its industry-standard APIs make it easy to port or reuse existing code, and its persistent publish/subscribe technology offers a highly flexible approach to integrating high-level applications with low-level business logic and services.

And last, there’s the experience of using systems based on QNX technology. One reason we build technology concept cars is that words cannot express the rich, integrated user experiences that our technology can enable: experiences that blend graphics, acoustics, touch interfaces, natural language processing, and other technologies to make driving simpler and more convenient.

Nor can words express the sheer variety of user experiences that our platform makes possible. If you look at the QNX-powered infotainment systems that automakers ship today, it soon becomes obvious that they aren’t cookie-cutter systems. Rather, each system projects the unique values, features, and brand identity of the automaker. For evidence, look no further than GM OnStar and the Audi Virtual Cockpit. They are totally distinct from each other, yet both are built on the very same OS platform.

On a personal note, I must mention one last form of experience: that of working with my QNX colleagues. Because that, to me, is the most wonderful experience of all.

One day I’ll be Luke Skywalker

Cyril Clocher

As we all begin preparing for our trek to Vegas for CES 2015, I would like my young friends (born in the 70s, of course) to reflect on their impressions of the first episode of Lucas’s trilogy back in 1977. For my part, I clearly remember thinking that one day I would be Luke Skywalker.

Young boys and girls were amazed by this epic space opera, and particularly by the technologies our heroes used to fight the Galactic Empire. You have to remember it was an era when we still used rotary phones and GPS was in its infancy. So you can imagine how impactful it was for us to see our favorite characters using wireless electronic gadgets with revolutionary HMIs such as natural voice recognition, gesture controls, or touch screens; droids speaking and enhancing human intelligence; and autonomous vehicles traveling the galaxy safely while their passengers played chess with a Wookiee. Now you’re with me…

But instead of becoming Luke Skywalker, a lot of us realized that we would have a bigger impact by inventing or engineering these technologies and by transforming early concepts into the real products we all use today. As a result, smartphones and wireless connectivity are now part of our everyday lives; the Internet of Things (IoT) is gaining popularity in applications such as activity trackers that monitor personal metrics; and our kids are more used to touch screens than to mice or keyboards, and cannot imagine online gaming without gesture control. In fact, I just used voice recognition to upgrade the Wi-Fi plan with my telco provider.

But the journey is not over yet. Our generation has yet to deliver an autonomous vehicle that is green, safe, and fun to control; I think the word “drive” will be obsolete for such a vehicle.

The automotive industry has taken several steps to achieve this exciting goal, including integration of advanced and connected in-car infotainment systems in more models, as well as a number of technologies categorized under Advanced Driver Assistance Systems (ADAS) that can create a safer and more distinctive driving experience. For more than a decade, Texas Instruments has invested in infotainment and ADAS: “Jacinto” and TDAx automotive processors as well as the many analog companion chips supporting these trends.

"Jacinto 6 EP" and "Jacinto 6 Ex" infotainment processors

For TI’s automotive team, the CES 2015 show is even more exciting than in previous years, as we’ve taken our concept of informational ADAS to the next step. Through joint effort and hard work, the TI and QNX teams have together implemented a real informational ADAS system running the QNX CAR™ Platform for Infotainment on a “Jacinto 6 Ex” processor.

I could try describing this system in detail, but just like the Star Wars movies, it’s best to experience our “Jacinto 6 Ex” and QNX CAR Platform-based system in person. Contact your TI or QNX representative today and schedule a meeting to visit our private suite at CES at the TI Village (N115-N119), where you can immerse yourself in a demonstration that combines IVI, cluster, megapixel surround view, and a DLP®-based HUD with augmented reality, all running on a single “Jacinto 6 Ex” SoC. And don't forget to visit the QNX booth (2231), where you can see the QNX reference vehicle running a variety of ADAS and infotainment applications on “Jacinto 6” processors.

Integrated cockpit featuring DLP powered HUD and QNX CAR Platform running on a single “Jacinto 6 Ex” SoC.

Labels: ADAS, Autonomous cars, CES, HMIs, Infotainment, QNX CAR, Reference vehicle, Texas Instruments

QNX celebrates crystal anniversary in automotive

Long-term success in the auto market relies on a potent mix of passion, persistence, innovation, and quality. And let's not forget trust.

Imagine, for a minute, that you are a bird. Not just any bird, but a bird that can fly 11,000 kilometers, non-stop, without food or rest.

That’s hard to imagine, I know. But the bird in question — the bar-tailed godwit — is very real, and its ability to fly across vast distances is well documented. Every year, as winter approaches, the godwit lifts off from its breeding grounds in Alaska, bears southwest, and doesn't stop beating its wings until it touches down in New Zealand. Total uninterrupted flight time: 216 hours.

The godwit epitomizes indomitable drive, infused with a dose of pure stick-with-it-ness. Qualities that, to me, characterize QNX Software Systems’ success in the auto market — a story that took flight 15 years ago.

It all started in 1999, when Motorola and QNX unveiled mobileGT, an automotive reference platform based on the QNX Neutrino OS. For the first time, QNX publicly threw its hat into the automotive ring. Mind you, QNX was already busy behind the scenes: 1999 also marked the first year that QNX technology shipped in passenger vehicles. It’s been a steady climb ever since, and you can now find QNX technology in tens of millions of vehicles.

There are many technical reasons why QNX has become a premier software provider for the automotive market. But for automakers and their tier one suppliers, technology alone isn’t enough. They also need to know that, as a supplier, you are deeply committed to the success of their projects — like the flight of the godwit, bailing out halfway isn’t an option. They also need to trust that, when you say you’ll do something, you will. And that you’ll do it on time. Even if you have to cross an ocean to do it.

In short, you might enter this market because of your skills and passion, but you thrive in it because you behave as a real partner, working in concert with your customers and fellow technology suppliers. That’s why I refer to our fifteenth anniversary in the car business with the same language used to describe a fifteenth wedding anniversary. Because we’re committed, we’re passionate, and we’re in for the long haul.

Bar-tailed godwit: long-distance champion © Andreas Trepte

Labels: Paul Leroux

The power of together

Bringing more technologies into the car is all well and good. The real goal, however, is to integrate them in a way that genuinely improves the driving experience.

Can we all agree that ‘synergy’ has become one of the most misused and overused words in the English language? In the pantheon of verbal chestnuts, synergy holds a place of honor, surpassed only by ‘best practices’ and ‘paradigm shift’.

Mind you, you can’t blame people for invoking the word so often. Because, as we all know, the real value in things often comes from their interaction — the moment they stop acting alone and start working in concert. The classic example is water, yeast, and flour, a combination that yields something far more flavorful than its constituent parts. I am speaking, of course, of bread.

Automakers get this principle. Case in point: adaptive cruise control, which takes a decades-old concept — conventional cruise control — and marries it with advances in radar sensors and digital signal processing. The result is something that doesn’t simply maintain a constant speed, but can help reduce accidents and, according to some research, traffic jams.

At QNX Software Systems, we also take this principle to heart. For example, read my recent post on the architecture of the QNX CAR Platform and you’ll see that we consciously designed the platform to help things work together. In fact, the platform's ability to integrate numerous technologies, in a seamless and concurrent fashion, is arguably its most salient quality.

This ability to blend disparate technologies into a collaborative whole isn't just a gee-whiz feature. Rather, it is critical to enabling the continued evolution and success of the connected car. Because it’s not enough to have smartphone connectivity. Or cloud connectivity. Or digital instrument clusters. Or any number of ADAS features, from collision warnings to autonomous braking. The real magic, and real value to the consumer, occurs when some or all of these come together to create something greater than the sum of the parts.

Simply put, it's all about the — dare I say it? — synergy that thoughtful integration can offer.

At CES this year, we will explore the potential of integration and demonstrate the unexpected value it can bring. The story begins on the QNX website.

Labels: ADAS, CES, Concept car, Paul Leroux

First impressions are the most lasting

Lynn Gayowski

If I were to describe this concept car with one word, I would choose "user-centric". (I love how hyphens can really help in these succinct situations.) We designed the infotainment system and digital instrument cluster with a vision to help drivers interact in new and seamless ways with their vehicles. This concept car is a great example of how QNX technology can enable a more natural user experience.

As we hum a few bars of Sarah McLachlan's classic I Will Remember You, let's look back at some highlights.

The first thing that catches your eye is the matte exterior and stylish lines, exuding just a soupçon of James Bond:

But let's get to the technology. At 21" by 7" the touch screen is a showstopper. It brings a rich, graphical interface to both driver and passenger. This is where you can really see the user-centric design, with options to control the infotainment system with the touch screen, physical buttons, a jog wheel, or voice commands:

We really wanted to use the car to highlight the flexibility of the QNX CAR Platform and how customers can easily modify features using the platform's pre-integrated technologies. A great example of this is the car's navigation system. The car actually has four different navigation solutions installed, demonstrating how automakers can choose the solution best suited to a particular geography or language. EB Street Director is featured in this photo:

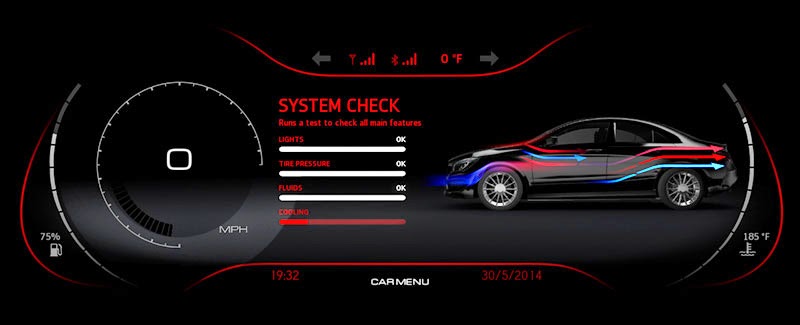

The infotainment system may wow you, but don't forget about the cluster. The Mercedes has a dynamically reconfigurable digital instrument cluster that can display turn-by-turn directions, notifications of incoming phone calls, video from the car's front and rear cameras, as well as a tachometer, speedometer, and other virtual instruments, at a full 60 frames per second. The cluster can even notify you of incoming text messages on your phone. Simply push a steering-wheel button, and the system will read the message aloud, so you can keep your eyes on the road.

Another cool feature is the cluster's "virtual mechanic" which lets you access vehicle info like tire pressure, brake wear, and fuel, oil, and windshield fluid levels:

What car of the future would be complete without connectivity? A custom "key fob" app allows you to remotely access system maintenance information, control the media player, locate the car on a map, and perform a number of actions like starting the car and opening the windows. This cross-platform HTML5 app can run on any smartphone or tablet:

As an overall view of the Mercedes, one of my favourite pieces is this video by Sami Haj-Assaad of AutoGuide, where he takes a look at the design and features of the car. His closing quote really sums up the innovation showcased: "The infotainment industry is going through a huge upgrade, with QNX leading the charge."

I hope you enjoyed the 2014 QNX technology concept car. Watch for the reveal of our 2015 technology concept car January 6 at CES in Las Vegas!

Cast your vote: which CES show car, past or present, should get a makeover at this year’s show?

Lynn Gayowski

Starting today, through Monday, January 5, cast your vote on which CES show car, past or present, from QNX Software Systems you would most like to see revamped at this year's show. We will announce the results on Tuesday, January 6 – the first day of the show. Here is our full list of cars:

- The latest — technology concept car based on a Mercedes-Benz CLA45 AMG

- The sound machine — technology concept car for acoustics based on a Kia Soul

- The ultimate show-me car — technology concept car based on a Bentley Continental GT

- The most jazzed-up Jeep ever to hit CES — reference vehicle based on a Jeep Wrangler

- A Porsche you could talk to — technology concept car based on a Porsche 911 Carrera

- A true production car — BMW Z4 Roadster with QNX-powered ConnectedDrive

- The first-ever QNX technology concept car to hit CES — LTE Connected Car based on a Toyota Prius

What will it be — the BMW Z4 Roadster or the Bentley Continental GT? Perhaps it's the LTE Connected Car based on a Toyota Prius or the Kia Soul that we had on display last year?

Let the voting begin!

Labels: CES, Concept car, Lynn Gayowski, Reference vehicle

Beyond the dashboard: discover how QNX touches your everyday life

QNX technology is in cars — lots of them. But it’s also in everything from planes and trains to smart phones, smart buildings, and smart vacuum cleaners. If you're interested, I happen to have an infographic handy...

I was a lost and lonely soul. Friends would cut phone calls short, strangers would move away from me on the bus, and acquaintances at cocktail parties would excuse themselves, promising to come right back — they never came back. I was in denial for a long time, but slowly and painfully, I came to the realization that I had to take ownership of this problem. Because it was my fault.

To be specific, it was my motor mouth. Whenever someone asked what I did for a living, I’d say I worked for QNX. That, of course, wasn’t a problem. But when they asked what QNX did, I would hold forth on microkernel OS architectures, user-space device drivers, resource manager frameworks, and graphical composition managers, not to mention asynchronous messaging, priority inheritance, and time partitioning. After all, who doesn't want to learn more about time partitioning?

Well, as I subsequently learned, there’s a time and place for everything. And while my passion about QNX technology was well-placed, my timing was lousy. People weren’t asking for a deep dive; they just wanted to understand QNX’s role in the scheme of things.

As it turns out, QNX plays a huge role, and in very many things. I’ve been working at QNX Software Systems for 25 years, and I am still gobsmacked by the sheer variety of uses that QNX technology is put to. I'm especially impressed by the crossover effect. For instance, what we learn in nuclear plants helps us offer a better OS for safety systems in cars. And what we learn in smartphones makes us a better platform supplier for companies building infotainment systems.

All of which is to say, the next time someone asks me what QNX does, I will avoid the deep dive and show them this infographic instead. Of course, if they subsequently ask *how* QNX does all this, I will have a well-practiced answer. :-)

Did I mention? You can download a high-res JPEG of this infographic from our Flickr account and a PDF version from the QNX website.

Stay tuned for 2015 CES, where we will introduce even more ways QNX can make a difference, especially in how people design and drive cars.

And lest I forget, special thanks to my colleague Varghese at BlackBerry India for conceiving this infographic, and to the QNX employees who provided their invaluable input.

Words to the wise: discover, integrate, trust, and experience

Lynn Gayowski

At the heart of our CES presence, from our booth theme to show demos, will be four words that encapsulate the key values that QNX Software Systems delivers — discover, integrate, trust, and experience. Each week leading up to CES, we'll highlight one of these words and outline how it relates to the core of QNX Software Systems and its technologies.

We're kicking off the series tomorrow so be sure to check back to read our latest blog post.

Labels: CES, Concept car, Lynn Gayowski, Reference vehicle

A question of getting there

The third of a series of posts on the QNX CAR Platform. In this installment, we turn to a key point of interest: the platform’s navigation service.

From the beginning, we designed the QNX CAR Platform for Infotainment with flexibility in mind. Our philosophy is to give customers the freedom to choose the hardware platforms, application environments, user-interface tools, and smartphone connectivity protocols that best address their requirements. This same spirit of flexibility extends to navigation solutions.

For evidence, look no further than our current technology concept car. It can support navigation from Elektrobit:

from Nokia HERE:

and from Kotei Informatics:

These are but a few examples. The QNX CAR Platform can also support navigation solutions from companies like AISIN AW, NavNGo, TCS, TeleNav, and ZENRIN DataCom, enabling automakers and automotive Tier 1 suppliers to choose the navigation solution, or solutions, best suited to the regions or demographics they wish to target. (In addition to these embedded solutions, the platform can also provide access to smartphone-based navigation services through its support for MirrorLink and other connectivity protocols — more on this in a subsequent post.)

Under the hood

In our previous installment, we looked at the QNX CAR Platform’s middleware layer, which provides infotainment applications with a variety of services, including Bluetooth, radio, multimedia discovery and playback, and automatic speech recognition. The middleware layer also includes a navigation service that, true to the platform’s overall flexibility, allows developers to use navigation engines from multiple vendors and to change engines without affecting the high-level navigation applications that the user interacts with.

An illustration is in order. If you look at the image below, you’ll see OpenGL-based map data rendered on one graphics layer and, on the layer above it, Qt-based application data (current street, distance to destination, and other route information) pulled from the navigation engine. By taking advantage of the platform’s navigation service, you could swap in a different navigation engine without having to rewrite the Qt application:

To achieve this flexibility, the navigation service makes use of the QNX CAR Platform’s persistent publish/subscribe (PPS) messaging, which cleanly abstracts lower-level services from the higher-level applications they communicate with. Let's look at another diagram to see how this works:

In the PPS model, services publish information to data objects; other programs can subscribe to those objects and receive notifications when the objects have changed. So, for the example above, the navigation engine could generate updates to the route information, and the navigation service could publish those updates to a PPS “navigation status object,” thereby making the updates available to any program that subscribes to the object — including the Qt application.
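To make the publish/subscribe idea concrete, here is a minimal in-memory sketch in Python. It is not the QNX PPS API; the `PpsObject` class and the attribute names `current_street` and `distance_to_destination_m` are hypothetical stand-ins chosen purely for illustration.

```python
from typing import Callable, Dict, List

Attributes = Dict[str, str]
Subscriber = Callable[[Attributes], None]


class PpsObject:
    """Hypothetical in-memory stand-in for a PPS data object (not the QNX API)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._attrs: Attributes = {}  # last published values persist here
        self._subscribers: List[Subscriber] = []

    def subscribe(self, callback: Subscriber) -> None:
        """Register a callback and hand it the current state, if there is any."""
        self._subscribers.append(callback)
        if self._attrs:
            callback(dict(self._attrs))

    def publish(self, **changes: str) -> None:
        """Merge the changed attributes and notify every subscriber of the delta."""
        self._attrs.update(changes)
        for callback in self._subscribers:
            callback(dict(changes))

    def snapshot(self) -> Attributes:
        """Return a copy of the object's current attributes."""
        return dict(self._attrs)


# The navigation service publishes; the HMI subscribes. Neither holds a
# reference to the other, only to the shared object.
status = PpsObject("navigation_status")
hmi_updates: List[Attributes] = []
status.subscribe(hmi_updates.append)

status.publish(current_street="Main St", distance_to_destination_m="1200")
status.publish(distance_to_destination_m="900")

# A subscriber that arrives late still sees the persisted state.
late_updates: List[Attributes] = []
status.subscribe(late_updates.append)
```

In this sketch the first subscriber receives each change as it is published, while the late subscriber receives the merged state in one notification, which is one plausible reading of the "persistent" part of the model.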

With this approach, the Qt application doesn't need to know anything about the navigation engine, nor does the navigation engine need to know anything about the Qt app. As a result, either could be swapped out without affecting the other.
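The decoupling described here can be sketched as follows. This is an illustrative Python model under stated assumptions, not QNX or vendor code: `EngineA`, `EngineB`, `StatusObject`, `NavigationService`, `HmiApp`, and `run_with` are all hypothetical names, and the route values are made up.

```python
from typing import Callable, Dict, List, Protocol

RouteInfo = Dict[str, str]


class NavigationEngine(Protocol):
    """What the service expects of any engine; vendors are interchangeable."""

    def route_update(self) -> RouteInfo: ...


class EngineA:
    """Hypothetical vendor engine."""

    def route_update(self) -> RouteInfo:
        return {"current_street": "Main St", "distance_m": "1200"}


class EngineB:
    """A second hypothetical engine that computes a different distance."""

    def route_update(self) -> RouteInfo:
        return {"current_street": "Main St", "distance_m": "1250"}


class StatusObject:
    """Hypothetical shared status object that fans updates out to subscribers."""

    def __init__(self) -> None:
        self._subscribers: List[Callable[[RouteInfo], None]] = []

    def subscribe(self, callback: Callable[[RouteInfo], None]) -> None:
        self._subscribers.append(callback)

    def publish(self, attrs: RouteInfo) -> None:
        for callback in self._subscribers:
            callback(dict(attrs))


class NavigationService:
    """Bridges an engine to the status object; the only part that sees the engine."""

    def __init__(self, engine: NavigationEngine, status: StatusObject) -> None:
        self._engine = engine
        self._status = status

    def poll(self) -> None:
        self._status.publish(self._engine.route_update())


class HmiApp:
    """Stands in for the Qt application; it only knows the status object."""

    def __init__(self, status: StatusObject) -> None:
        self.banner = ""
        status.subscribe(self._on_update)

    def _on_update(self, attrs: RouteInfo) -> None:
        self.banner = f"{attrs['current_street']}, {attrs['distance_m']} m to go"


def run_with(engine: NavigationEngine) -> str:
    """Wire up one engine and return what the unchanged HMI ends up displaying."""
    status = StatusObject()
    app = HmiApp(status)
    NavigationService(engine, status).poll()
    return app.banner
```

Calling `run_with(EngineA())` and then `run_with(EngineB())` exercises the same `HmiApp` code against two engines; only the argument passed to the service changes.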

Here's another example of how this model allows components to communicate with one another:

- Using the system's human machine interface (HMI), the driver asks the navigation system to search for a point of interest (POI); this could take the form of a voice command or a tap on the system display.

- The HMI responds by writing the request to a PPS “navigation control” object.

- The navigation service reads the request from the PPS object and forwards it to the navigation engine.

- The navigation engine returns the result.

- The navigation service updates the PPS object to notify the HMI that its request has been completed. It also writes the results to a database so that all subscribers to this object can read the results.
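The five steps above can be sketched in a few lines of code. This is only an illustration under loud assumptions: `ControlObject`, `FakeEngine`, `NavigationService`, and `Hmi` are hypothetical names, the POI data is invented, a plain dictionary stands in for the results database, and callbacks run synchronously, whereas real PPS notifications are asynchronous.

```python
import itertools


class ControlObject:
    """Hypothetical stand-in for the PPS "navigation control" object."""

    def __init__(self):
        self._listeners = {"request": [], "response": []}

    def subscribe(self, attribute, callback):
        self._listeners[attribute].append(callback)

    def write(self, attribute, message):
        for callback in list(self._listeners[attribute]):
            callback(message)


class FakeEngine:
    """Stand-in for a navigation engine; the POI data is made up."""

    POIS = {"coffee": ["Bean There", "Java Stop"]}

    def search(self, term):
        return list(self.POIS.get(term, []))


class NavigationService:
    def __init__(self, control, engine, database):
        self.control = control
        self.engine = engine
        self.database = database
        control.subscribe("request", self.on_request)

    def on_request(self, message):
        # Steps 3 and 4: forward the request to the engine and get the result.
        results = self.engine.search(message["term"])
        # Step 5: store the results first, then announce completion.
        self.database[message["id"]] = results
        self.control.write("response", {"id": message["id"], "status": "done"})


class Hmi:
    def __init__(self, control, database):
        self.control = control
        self.database = database
        self.shown = []
        self._ids = itertools.count(1)
        control.subscribe("response", self.on_response)

    def search_poi(self, term):
        # Steps 1 and 2: the driver's request is written to the control object.
        request_id = next(self._ids)
        self.control.write("request", {"id": request_id, "term": term})
        return request_id

    def on_response(self, message):
        # The HMI reads the results from the database, not from the engine.
        self.shown = self.database[message["id"]]


control = ControlObject()
database = {}
service = NavigationService(control, FakeEngine(), database)
hmi = Hmi(control, database)
first_id = hmi.search_poi("coffee")
```

Writing the results to the database before posting the completion notice matters in this sketch: a subscriber that reacts to the notice should always find the data already in place.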

To give developers a jump start, the QNX CAR Platform comes pre-integrated with Elektrobit’s EB street director navigation software. This reference integration shows developers how to implement "command and control" between the HMI and the participating components, including the navigation engine, navigation service, window manager, and PPS interface. As the above diagram indicates, the reference implementation works with both of the HMIs — one based on HTML5, the other based on Qt — that the QNX CAR Platform supports out of the box.


Labels:

Connected Car,

HMIs,

Navigation,

Paul Leroux

A need for speed... and safety

Matt Shumsky

So this raises the question: what’s the best way to design a software system that ensures the adaptive cruise control system keeps a safe distance from the car ahead? Or that tells the digital instrument cluster the correct information to display? And how can you make sure the display information isn’t corrupted?

Enter QNX and the ISO 26262 functional safety standard.

QNX Software Systems is partnering with LDRA to present a webinar on “Ensuring Automotive Functional Safety”. During this webinar, you’ll learn about:

- Development and verification tools proven to help provide safer automotive software systems

- How suppliers can develop software systems faster with an OS tuned for automotive safety

Ensuring Automotive Functional Safety with QNX and LDRA

Thursday, November 20, 2014

9:00 am PST / 12:00 pm EST / 5:00 pm UTC

I hope you can join us!

Labels:

ADAS,

Autonomous cars,

Safety systems

Building (sound) character into cars

Tina Jeffrey

Car engines don’t sound like they used to. Correction: They don’t sound as good as they used to. And for that, you can blame modern fuel-saving techniques, such as the practice of deactivating cylinders when engine load is light. Still, if you’re an automaker, delivering an optimal engine sound is critical to ensuring a satisfying user experience. To address this need, we’ve released QNX Acoustics for Engine Sound Enhancement (ESE), a complementary technology to our solution for active noise control.

The why

We first demonstrated our ESE technology at 2014 CES in the QNX technology concept car for acoustics.

ESE isn’t new. Traditionally, automakers have used mechanical solutions that modify the design of the exhaust system or intake pipes to differentiate the sound of their vehicles. Today, automakers are shifting to software-based ESE, which costs less and does a better job at augmenting engine sounds that have been degraded by new, efficient engine designs. With QNX Acoustics for Engine Sound Enhancement, automakers can accurately preserve an existing engine sound for use in a new model, craft a unique sound to market a new brand, or offer distinct sounds associated with different transmission modes, such as sport or economy.

The how

QNX Acoustics for Engine Sound Enhancement is entirely software based. It comprises a runtime library that augments naturally transmitted engine sounds as well as a design tool that provides several advanced features for defining and tuning engine-sound profiles. The library runs on the infotainment system or on the audio system DSP and plays synthesized sound synchronized to the engine’s real-time data: RPM, speed, throttle position, transmission mode, etc.

The ESE designer tool enables sound designers to create, refashion, and audition sounds directly on their desktops by graphically defining the mapping between a synthesized engine-sound profile and real-time engine parameters. The tool supports both granular and additive synthesis, along with a variety of digital signal processing techniques to configure the audio path, including gain, filter, and static equalization control.
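For readers unfamiliar with additive synthesis, here is a rough sketch of the general technique: a tone is built by summing sine waves at multiples of a base frequency tied to engine speed. This is not the QNX library or its algorithm. The function names, the harmonic gains, and the sample rate are all assumptions chosen for illustration; the only hard fact used is that a four-stroke engine fires each cylinder once every two crankshaft revolutions.

```python
import math


def firing_frequency_hz(rpm, cylinders=4):
    """Firing frequency of a four-stroke engine.

    The crankshaft turns rpm / 60 times per second, and each cylinder
    fires once every two revolutions.
    """
    return rpm / 60.0 * cylinders / 2.0


def synthesize(rpm, harmonic_gains, duration_s=0.01, sample_rate=8000, cylinders=4):
    """Sum sine waves at integer multiples of the firing frequency.

    harmonic_gains[0] scales the fundamental, harmonic_gains[1] the
    second harmonic, and so on. The gains are illustrative only.
    """
    base = firing_frequency_hz(rpm, cylinders)
    sample_count = round(duration_s * sample_rate)
    samples = []
    for i in range(sample_count):
        t = i / sample_rate
        samples.append(
            sum(
                gain * math.sin(2.0 * math.pi * base * (order + 1) * t)
                for order, gain in enumerate(harmonic_gains)
            )
        )
    return samples


# A short burst of sound for an engine turning at 3000 RPM.
burst = synthesize(3000, harmonic_gains=[1.0, 0.5])
```

In a real system the gains would presumably come from a tuned sound profile and vary with throttle position or transmission mode; here they are fixed so the mapping from RPM to pitch is easy to see.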

The value

QNX Acoustics for Engine Sound Enhancement offers automakers numerous benefits in the design of sound experiences that best reflect their brand:

- Ability to design consistent powertrain sounds across the full engine operating range

- Small footprint runtime library that can be ported to virtually any DSP or CPU running Linux or the QNX OS, making it easy to customize all vehicle models and to leverage work done in existing models

- Tight integration with other QNX acoustics middleware libraries, including QNX Acoustics for Active Noise Control, enabling automakers to holistically shape their interior vehicle soundscape

- Dedicated acoustic engineers that can support development and pre-production activities, including porting to customer-specific hardware, system audio path verification, and platform and vehicle acoustic tuning

In the meantime, learn more about this solution on the QNX website.

Japan update: ADAS, wearables, integrated cockpits, and autonomous cars

Yoshiki Chubachi

A couple of weeks ago, QNX Software Systems sponsored Telematics Japan in Tokyo. This event offers a great opportunity to catch up with colleagues from automotive companies, discuss technology and business trends, and showcase the latest technology demos. Speaking of which, here’s a photo of me with a Japan-localized demo of the QNX CAR Platform. You can also see a QNX-based digital instrument cluster in the lower-left corner — this was developed by Three D, one of our local technology partners:

While at the event, I spoke on the panel, “Evolving ecosystems for future HMI, OS, and telematics platform development.” During the discussion, we conducted a real-time poll and asked the audience three questions:

1) Do you think having Apple CarPlay and Android Auto will augment a vehicle brand?

2) Do you expect wearable technologies to be integrated into cars?

3) If your rental car were hacked, who would you complain to?

For question 1, 32% of the audience said CarPlay and Android Auto will improve a brand; 68% didn't think so. In my opinion, this result indicates that smartphone connectivity in cars is now an expected feature. For question 2, 76% answered that they expect to see wearables integrated into cars. This response gives us a new perspective — people are looking at wearables as a possible addition to go with ADAS systems. For example, a wearable device could help prevent accidents by monitoring the driver for drowsiness and other dangerous signs. For question 3, 68% said they would complain to the rental company. Mind you, this raises the question: if your own car were hacked, who would you complain to?

Integrated cockpits

There is growing concern around safety and security as companies attempt to grow more business by leveraging connectivity in cars. The trend is apparent if you look at the number of safety- and security-related demos at various automotive shows.

Case in point: I recently attended a private automotive event hosted by Renesas, where many ADAS and integrated cockpit demos were on display. And last month, CEATEC Japan (aka the CES of Japan) featured integrated cockpit demos from companies like Fujitsu, Pioneer, Mitsubishi, Kyocera, and NTT Docomo.

For the joy of it

Things are so different from when I first started developing in-car navigation systems 20 years ago. Infotainment systems are now turning into integrated cockpits. In Japan, the automotive industry is looking at the early 2020s as the time when commercially available autonomous cars will be on the road. In the coming years, the in-car environment, including infotainment, cameras, and other systems, will change immensely. I’m not exactly sure what cars in the year 2020 will look like, but I know it will be something I could never have imagined 20 years ago.

A panel participant at Telematics Japan said to me, “If autonomous cars become reality and my car is not going to let me drive anymore, I am not sure what the point of having a car is.” This is true. As we continue to develop for future cars, we may want to remind ourselves of the “joy of driving” factor.

A question of architecture

The second of a series on the QNX CAR Platform. In this installment, we start at the beginning — the platform’s underlying architecture.

In my previous post, I discussed how infotainment systems must perform multiple complex tasks, often all at once. At any time, a system may need to manage audio, show backup video, run 3D navigation, synch with Bluetooth devices, display smartphone content, run apps, present vehicle data, process voice signals, perform active noise control… the list goes on.

The job of integrating all these functions is no trivial task — an understatement if ever there was one. But as with any large project, starting with the right architecture, the right tools, and the right building blocks can make all the difference. With that in mind, let’s start at the beginning: the underlying architecture of the QNX CAR Platform for Infotainment.

The architecture consists of three layers: human machine interface (HMI), middleware, and platform.

The HMI layer

The HMI layer is like a bonus pack: it supports two reference HMIs out of the box, both of which have the same appearance and functionality. So what’s the difference? One is based on HTML5, the other on Qt 5. This choice demonstrates the underlying flexibility of the platform, which allows developers to create an HMI with any of several technologies, including HTML5, Qt, or a third-party toolkit such as Elektrobit GUIDE or Crank Storyboard.

Mind you, the choice goes further than that. When you build a sophisticated infotainment system, it soon becomes obvious that no single tool or technology can do the job. The home screen, which may contain controls for Internet radio, hands-free calls, HVAC, and other functions, might need an environment like Qt. The navigation app, for its part, will probably use OpenGL ES. Meanwhile, some applications might be based on Android or HTML5. Together, all these heterogeneous components make up the HMI.

The QNX CAR Platform embraces this heterogeneity, allowing developers to use the best tools and application environments for the job at hand. More to the point, it allows developers to blend multiple app technologies into a single, unified user interface, where they can all share the same display, at the same time.

To perform this blending, the platform employs several mechanisms, including a component called the graphical composition manager. This manager acts as a kind of universal framework, providing all applications, regardless of how they’re built, with a highly optimized path to the display.

For example, look at the following HMI:

Now look at the HMI from another angle to see how it comprises several components blended together by the composition manager:

To the left, you see video input from a connected media player or smartphone. To the right, you see a navigation application based on OpenGL ES map-rendering software, with an overlay of route metadata implemented in Qt. And below, you see an HTML page that provides the underlying wallpaper; this page could also display a system status bar and UI menu bar across all screens.

For each component rendered to the display, the graphical composition manager allocates a separate window and frame buffer. It also allows the developer to control the properties of each individual window, including location, transparency, rotation, alpha, brightness, and z-order. As a result, it becomes relatively straightforward to tile, overlap, or blend a variety of applications on the same screen, in whichever way creates the best user experience.
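To show what blending by z-order and transparency amounts to, here is a toy compositor. It is a sketch of the general back-to-front blending technique, not the composition manager's actual interface: the `Window` fields, the `compose` function, and the one-row grayscale "screen" are all assumptions made to keep the example short.

```python
from dataclasses import dataclass


@dataclass
class Window:
    name: str
    x: int          # left edge on the one-row "screen"
    width: int
    color: float    # a single gray level from 0.0 to 1.0, for brevity
    alpha: float    # 1.0 is fully opaque, 0.0 is fully transparent
    z_order: int    # higher values are drawn on top


def compose(windows, screen_width, background=0.0):
    """Blend windows back to front into one row of pixels."""
    row = [background] * screen_width
    for window in sorted(windows, key=lambda w: w.z_order):
        left = max(window.x, 0)
        right = min(window.x + window.width, screen_width)
        for px in range(left, right):
            # Standard "over" blend of the window onto what is already there.
            row[px] = window.alpha * window.color + (1.0 - window.alpha) * row[px]
    return row


windows = [
    Window("route overlay", x=2, width=2, color=1.0, alpha=0.5, z_order=1),
    Window("wallpaper", x=0, width=4, color=0.2, alpha=1.0, z_order=0),
]
frame = compose(windows, screen_width=4)
```

Because the windows are sorted by `z_order` before drawing, the order in which applications register them does not matter. Changing one window's `alpha` or `z_order` changes the final image without touching the application that owns the other window.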

The middleware layer

The middleware layer provides applications with a rich assortment of services, including Bluetooth, multimedia discovery and playback, navigation, radio, and automatic speech recognition (ASR). The ASR component, for example, can be used to turn on the radio, initiate a Bluetooth phone call from a connected smartphone, or select a song by artist or song title.

I’ll drill down into several of these services in upcoming posts. For now, I’d like to focus on a fundamental service that greatly simplifies how all other services and applications in the system interact with one another. It’s called persistent publish/subscribe messaging, or PPS, and it provides the abstraction needed to cleanly separate high-level applications from low-level business logic and services.

Let’s rewind a minute. To implement communications between software components, C/C++ developers must typically define direct, point-to-point connections that tend to “break” when new features or requirements are introduced. For instance, an application communicates with a navigation engine, but all connections enabling that communication must be redefined when the system is updated with a different engine.

This fragility might be acceptable in a relatively simple system, but it creates a real bottleneck when you are developing something as complex, dynamic, and quickly evolving as the design for a modern infotainment system. PPS addresses the problem by allowing developers to create loose, flexible connections between components. As a result, it becomes much easier to add, remove, or replace components without having to modify other components.

So what, exactly, is PPS? Here’s a textbook answer: an asynchronous object-based system that consists of publishers and subscribers, where publishers modify the properties of data objects and the subscribers to those objects receive updates when the objects have been modified.

So what does that mean? Well, in a car, PPS data objects allow applications to access services such as the multimedia engine, voice recognition engine, vehicle buses, connected smartphones, hands-free calling, and contact databases. These data objects can each contain multiple attributes, each attribute providing access to a specific feature — such as the RPM of the engine, the level of brake fluid, or the frequency of the current radio station. System services publish these objects and modify their attributes; other programs can then subscribe to the objects and receive updates whenever the attributes change.

The PPS service is programming-language independent, allowing programs written in a variety of programming languages (C, C++, HTML5, Java, JavaScript, etc.) to intercommunicate, without any special knowledge of one another. Thus, an app in a high-level environment like HTML5 can easily access services provided by a device driver or other low-level service written in C or C++.
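One reason this language independence is plausible: as I understand the QNX documentation, PPS objects are exposed as text files, with an `@name` line followed by one `attribute:encoding:value` line per attribute. Treat that syntax, and especially the encoding codes below, as an assumption to check against the official docs. Under that assumption, any language that can read text can consume an object. The `parse_pps_text` helper and the sample radio object are hypothetical.

```python
import json


def parse_pps_text(text):
    """Parse one object in the assumed "@name / attr:encoding:value" layout.

    Assumed encodings: "n" numeric, "b" boolean, "json" JSON text,
    and an empty encoding for a plain string.
    """
    name = None
    attributes = {}
    for line in text.splitlines():
        if not line:
            continue
        if line.startswith("@"):
            name = line[1:]
            continue
        # Split on the first two colons only, so values may contain colons.
        key, encoding, raw = line.split(":", 2)
        if encoding == "n":
            value = float(raw) if "." in raw else int(raw)
        elif encoding == "b":
            value = raw == "true"
        elif encoding == "json":
            value = json.loads(raw)
        else:
            value = raw
        attributes[key] = value
    return name, attributes


sample = "@radio\nfrequency:n:101.5\nband::fm\nseek:b:false\n"
object_name, radio = parse_pps_text(sample)
```

The same dozen lines could be written just as easily in C, Java, or JavaScript, which is the point the paragraph above makes.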

I’m only touching on the capabilities of PPS. To learn more, check out the QNX documentation on this service.

The platform layer

The platform layer includes the QNX OS and the board support packages, or BSPs, that allow the OS to run on various hardware platforms.

A BSP may not sound like the sexiest thing in the world — it is, admittedly, a deeply technical piece of software — but without it, nothing else works. And, in fact, one reason QNX Software Systems has such a strong presence in automotive is that it provides BSPs for all the popular infotainment platforms from companies like Freescale, NVIDIA, Qualcomm, and Texas Instruments.

As for the QNX Neutrino OS, you could write a book about it — which is another way of saying it’s far beyond the scope of this post. Suffice it to say that its modularity, extensibility, reliability, and performance set the tone for the entire QNX CAR Platform. To get a feel for what the QNX OS brings to the platform (and by extension, to the automotive industry), I invite you to visit the QNX Neutrino OS page on the QNX website.


Labels:

Android,

Apps,

Connected Car,

Fast boot,

HMIs,

HTML5,

Infotainment,

Paul Leroux,

QNX CAR

A sweet ride? You’d better 'beleave' it

Is Autumn the best season for a long, leisurely Sunday drive? Well, I don’t know about your neck of the woods, but in my neck, the trees blaze like crimson, orange, and yellow candles, transfiguring back roads into cathedrals of pure color. When I see every leaf on every tree glow like a piece of sunlight-infused stained glass, I make a religious effort to jump behind the wheel and get out there!

Now, of course, you can enjoy your Autumn drive in any car worth its keep. But some cars make the ride sweeter than others — and the Mercedes S Class Coupe, with its QNX-powered infotainment system and instrument cluster, is deliciously caloric.

This isn’t a car for the prim, the proper, the austere. It’s for pure pleasure, whether you take pleasure in performance, luxury, or beauty of design. Or all three. The perfect car, in other words, for an Autumn drive. Which is exactly what the folks at Mercedes thought. In fact, they made a photo essay about it; check it out on their Facebook page.

Source: Mercedes


Labels:

Infotainment,

Mercedes,

Paul Leroux,

QNX CAR,

QNX OS

Attending SAE Convergence? Here’s why you should visit booth 513

Cars and beer don’t mix. But discussing cars while having a beer? Now you’re talking. If you’re attending SAE Convergence next week, you owe it to yourself to register for our “Spirits And Eats” event at 7:00 pm Tuesday. It’s the perfect occasion to kick back and enjoy the company of people who, like yourself, are passionate about cars and car electronics. And it isn’t a bad networking opportunity either — you’ll meet folks from a variety of automakers, Tier 1s, and technology suppliers in a relaxed, convivial atmosphere.


But you know what? It isn’t just about the beer. Or the company. It’s also about the Benz. Our digitally modded Mercedes-Benz CLA45 AMG, to be exact. It’s the latest QNX technology concept car, and it’s the perfect vehicle (pun fully intended) for demonstrating how QNX technology can enable next-generation infotainment systems. Highlights include:

- A multi-modal user experience that blends touch, voice, and physical controls

- A secure application environment for Android, HTML5, and OpenGL ES

- Smartphone connectivity options for projecting smartphone apps onto the head unit

- A dynamically reconfigurable digital instrument cluster that displays turn-by-turn directions, notifications of incoming phone calls, and video from front and rear cameras

- Multimedia framework for playback of content from USB sticks, DLNA devices, etc.

- Full-band stereo calling — think phone calls with CD quality audio

- Engine sound enhancement that synchronizes synthesized engine sounds with engine RPM

Here, for example, is the digital cluster:

And here is a closeup of the head unit:

And here’s a shot of the cluster and head unit together:

As for the engine sound enhancement and high-quality hands-free audio, I can’t reproduce these here; you’ll have to come see the car and experience them first hand. (Yup, that's an invite.)

If you like what you see, and are interested in what you can hear, visit us at booth #513. And if you'd like to schedule a demo or reserve some time with a QNX representative in advance, we can accommodate that, too. Just send us an email.

Are you ready to stop micromanaging your car?

I will get to the above question. Honest. But before I do, allow me to pose another one: When autonomous cars go mainstream, will anyone even notice?

The answer to this question depends on how you define the term. If you mean completely and absolutely autonomous, with no need for a steering wheel, gas pedal, or brake pedal, then yes, most people will notice. But long before these devices stop being built into cars, another phenomenon will occur: people will stop using them.

Allow me to rewind. Last week, Tesla announced that its Model S will soon be able to “steer to stay within a lane, change lanes with the simple tap of a turn signal, and manage speed by reading road signs and using traffic-aware cruise control.” I say soon because these functions won't be activated until owners download a software update in the coming weeks. But man, what an update.

Tesla may now be at the front of the ADAS wave, but the wave was already forming — and growing. Increasingly, cars are taking over mundane or hard-to-perform tasks, and they will only become better at them as time goes on. Whether it’s autonomous braking, automatic parking, hill-descent control, adaptive cruise control, or, in the case of the Tesla S, intelligent speed adaptation, cars will do more of the driving and, in so doing, socialize us into trusting them with even more driving tasks.

In other words, the next car you buy will prepare you for not having to drive the car after that.

You know what’s funny? At some point, the computers in cars will probably become safer drivers than humans. The humans will know it, but they will still clamor for steering wheels, brake pedals, and all the other traditional accoutrements of driving. Because people like control. Or, at the very least, the feeling that control is there if you want it.

It’s like cameras. I would never think of buying a camera that didn’t have full manual mode. Because control! But guess what: I almost never turn the mode selector to M. More often than not, it’s set to Program or Aperture Priority, because both of these semi-automated modes are good enough, and both allow me to focus on taking the picture, not on micromanaging my camera.

What about you? Are you ready for a car that needs a little less micromanagement?


Labels: ADAS, Autonomous cars, Paul Leroux

A question of concurrency

The first of a new series on the QNX CAR Platform for Infotainment. In this installment, I tackle the a priori question: why does the auto industry need this platform, anyway?

Define your terms, counseled Voltaire, and in keeping with his advice, allow me to begin with the following:

Con•cur•ren•cy \kən-kûr'-ən-sē\ n (1597) Cooperation, as of agents, circumstances, or events; agreement or union in action.

A good definition, as far as it goes. But it doesn’t go far enough for the purposes of this discussion. Wikipedia comes closer to the mark:

“In computer science, concurrency is a property of systems in which several computations execute simultaneously, and potentially interact with each other.”

That’s better, but it still falls short. However, the Wikipedia entry also states that:

“the base goals of concurrent programming include correctness, performance and robustness. Concurrent systems… are generally designed to operate indefinitely, including automatic recovery from failure, and not terminate unexpectedly.”

Now that’s more like it. Concurrency in computer systems isn’t simply a matter of doing several things all at once; it’s also a matter of delivering a solid user experience. The system must always be available and it must always be responsive: no “surprises” allowed.

This definition seems tailor-made for in-car infotainment systems. Here, for example, are some of the tasks that an infotainment system may perform:

- Run a variety of user applications, from 3D navigation to Internet radio, based on a mix of technologies, including Qt, HTML5, Android, and OpenGL ES

- Manage multiple forms of input: voice, touch, physical buttons, etc.

- Support multiple smartphone connectivity protocols such as MirrorLink and Apple CarPlay

- Perform services that smartphones cannot support, including:

  - HVAC control

  - discovery and playback of multimedia from USB sticks, DLNA devices, MTP devices, and other sources

  - retrieval and display of fuel levels, tire pressure, and other vehicle information

  - connectivity to Bluetooth devices

- Process voice signals to ensure the best possible quality of phone-based hands-free systems; this in itself can involve many tasks, including echo and noise removal, dynamic noise shaping, speech enhancement, etc.

- Perform active noise control to eliminate unwanted engine “boom” noise

- Offer extremely fast bootup times; a backup camera, for example, must come up within a second or two to be useful

[Image: Juggling multiple concurrent tasks]

The primary user of an infotainment system is the driver. So, despite juggling all these activities, an infotainment system must never show the strain. It must always respond quickly to user input and critical events, even when many activities compete for system resources. Otherwise, the driver will become annoyed or, worse, distracted. The passengers won’t be happy, either.

Still, that isn’t enough. Automakers also need to differentiate themselves, and infotainment serves as a key tool for achieving differentiation. So the infotainment system must not simply perform well; it must also allow the vehicle, or line of vehicles, to project the unique values, features, and brand identity of the automaker.

And even that isn’t enough. Most automakers offer multiple vehicle lines, each encompassing a variety of configurations and trim levels. So an infotainment design must also be scalable; that way, the work and investment made at the high end can be leveraged in mid-range and economy models. Because ROI.

[Image: Projecting a unique identity]

But you know what? That still isn’t enough. An infotainment system design must also be flexible. It must, for example, support new functionality through software updates, whether such updates are installed through a storage device or over the air. And it must have the ability to accommodate quickly evolving connectivity protocols, app environments, and hardware platforms. All with the least possible fuss.

The nitty and the gritty

Concurrency, performance, reliability, differentiation, scalability, flexibility: a tall order. But it’s exactly the order that the QNX CAR Platform for Infotainment was designed to fill.

Take, for example, product differentiation. If you look at the QNX-powered infotainment systems that automakers are shipping today, one thing becomes obvious: they aren’t cookie-cutter systems. Rather, each projects the unique values, features, and brand identity of its automaker, even though they are all built on the same, standards-based platform.

So how does the QNX CAR Platform enable all this? That’s exactly what my colleagues and I will explore over the coming weeks and months. We’ll get into the nitty and sometimes the gritty of how the platform works and why it offers so much value to companies that develop infotainment systems in various shapes, forms, and price points.

Stay tuned.

POSTSCRIPT: Read the next installment of the QNX CAR Platform series, A question of architecture.

Labels: Acoustic processing, Android, Apps, Connected Car, Fast boot, HMIs, HTML5, Infotainment, Paul Leroux, QNX CAR

A glaring look at rear-view mirrors

Some reflections on the challenge of looking backwards, followed by the vexing question: where, exactly, should video from a backup camera be displayed?

Mirror, mirror, above the dash, stop the glare and make it last! Okay, maybe I've been watching too many Netflix reruns of Bewitched. But mirror glare, typically caused by bright headlights, is a problem — and a dangerous one. It can create temporary blind spots on your retina, leaving you unable to see cars or pedestrians on the road around you.

Automotive manufacturers have offered solutions to this problem for decades. For instance, many car mirrors now employ electrochromism, which allows the mirror to dim automatically in response to headlights and other light sources. But when, exactly, did the first anti-glare mirrors come to market?

According to Wikipedia, the first manual-tilt day/night mirrors appeared in the 1930s. These mirrors typically use a prismatic, wedge-shaped design in which the rear surface (which is silvered) and the front surface (which is plain glass) are at angles to each other. In day view, you see light reflected off the silvered rear surface. But when you tilt the mirror to night view, you see light reflected off the unsilvered front surface, which, of course, has less glare.
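To put a rough number on "less glare": a bare air-to-glass surface reflects only a small fraction of the light that hits it. The sketch below uses the standard Fresnel formula for light striking a surface head-on. The refractive index of 1.5 is an assumed, typical value for ordinary glass (it is not a figure from the Wikipedia article), and a real mirror is viewed at an angle, so treat the result as a ballpark.

```python
def fresnel_reflectance(n1, n2):
    """Fraction of light reflected at the boundary between two media.

    Valid for normal (head-on) incidence only.
    """
    return ((n1 - n2) / (n1 + n2)) ** 2


N_AIR = 1.0
N_GLASS = 1.5  # assumed typical refractive index for ordinary glass

# Night view: you see the reflection off the unsilvered front surface.
night_view = fresnel_reflectance(N_AIR, N_GLASS)
print(f"Unsilvered front surface reflects about {night_view:.0%} of incoming light")
```

That works out to roughly 4 percent of the incoming light, which is why headlights look so much dimmer in night view than they do off the silvered surface.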

Manual-tilt day/night mirrors may have debuted in the 30s, but they were still a novelty in the 50s. Witness this article from the September 1950 issue of Popular Science:

True to their name, manual-tilt mirrors require manual intervention: You have to take your hand off the wheel to adjust them, after you’ve been blinded by glare. Which is why, as early as 1958, Chrysler was demonstrating mirrors that could tilt automatically, as shown in this article from the October 1958 issue of Mechanix Illustrated:

Images: Modern Mechanix blog

Fast-forward to backup cameras

Electrochromic mirrors, which darken electronically, have done away with the need to tilt, either manually or automatically. But despite their sophistication, they still can't overcome the inherent drawbacks of rear-view mirrors, which provide only a partial view of the area behind the vehicle — a limitation that contributes to backover accidents, many of them involving small children. Which is why NHTSA has mandated the use of backup cameras by 2018 and why the last two QNX technology concept cars have shown how video from backup cameras can be integrated with other content in a digital instrument cluster.

Actually, this raises the question: just where should backup video be displayed? In the cluster, as demonstrated in our concept cars? Or in the head unit, the rear-view mirror, or a dedicated screen? The NHTSA ruling doesn’t mandate a specific device or location, which isn't surprising, as each has its own advantages and disadvantages.

Consider, for example, ease of use: Will drivers find one location more intuitive and less distracting than the alternatives? In all likelihood, the answer will vary from driver to driver and will depend on individual cognitive styles, driving habits, and vehicle design.

Another issue is speed of response. According to NHTSA’s ruling, any device displaying backup video must do so within 2.5 seconds of the car shifting into reverse. Problem is, the ease of complying with this requirement depends on the device in question. For instance, NHTSA acknowledges that “in-mirror displays (which are only activated when the reverse gear is selected) may require additional warm-up time when compared to in-dash displays (which may be already in use for other purposes such as route navigation).”

At first blush, in-dash displays such as head units and digital clusters have the advantage here. But let’s remember that booting quickly can be a challenge for these systems because of their greater complexity — many offer a considerable amount of functionality. So imagine what happens when the driver turns the ignition key and almost immediately shifts into reverse. In that case, the cluster or head unit must boot up and display backup video within a handful of seconds. It's important, then, that system designers choose an OS that not only supports rich functionality, but also allows the system to start up and initialize applications in the least time possible.
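To make the timing problem concrete, here is a minimal sketch of a boot-time budget. The stage names and durations are hypothetical, picked only for illustration; the one number taken from the text is the 2.5-second limit. The sketch assumes the worst case described above, in which the driver shifts into reverse the moment the system starts booting, and it assumes the stages run one after another.

```python
REVERSE_VIDEO_DEADLINE_S = 2.5  # response-time figure quoted in the text above

# Hypothetical cold-boot stages and durations in seconds (illustrative only).
cold_boot_stages = {
    "bootloader": 0.3,
    "kernel and drivers": 0.5,
    "camera pipeline": 0.6,
    "display compositor": 0.4,
}


def time_to_first_frame(stages):
    """Total time before backup video appears, if stages run sequentially."""
    return sum(stages.values())


def meets_deadline(stages, deadline_s=REVERSE_VIDEO_DEADLINE_S):
    """True if the first camera frame appears within the deadline."""
    return time_to_first_frame(stages) <= deadline_s


# Same stages, but with a (hypothetical) full HMI load placed ahead of the camera.
with_full_hmi_first = {"full HMI": 1.5, **cold_boot_stages}

for name, stages in [("camera first", cold_boot_stages),
                     ("full HMI first", with_full_hmi_first)]:
    total = time_to_first_frame(stages)
    verdict = "OK" if meets_deadline(stages) else "too slow"
    print(f"{name}: first frame after {total:.1f} s ({verdict})")
```

With these made-up numbers, the camera path fits the budget only if it comes up before the heavier parts of the system. Loading the full HMI first pushes the first frame past the limit, which is the kind of ordering decision a fast-booting OS needs to make possible.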
